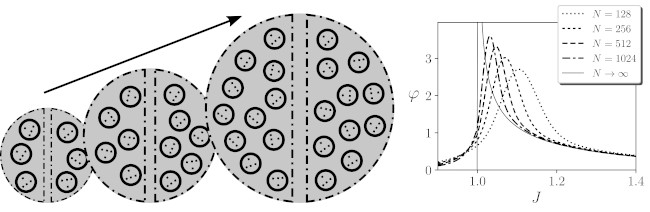

Together with Ezequiel Di Paolo, we have just published a new paper in which we explore how integrated information scales in very large systems. The capacity to integrate information is crucial for biological, neural, and cognitive processes, and proponents of Integrated Information Theory (IIT) regard it as a measure of conscious activity. In this paper we compute, analytically and numerically, the value of IIT measures (φ) for a family of Ising models of infinite size. This is exciting because it allows us to explore situations far removed from the kind of systems that can usually be analyzed in IIT, which are limited to a few units because of the theory's computational cost. Moreover, our analysis allows us to connect features of integrated information with well-known features of critical phase transitions in statistical mechanics.

Aguilera, M & Di Paolo, EA (2019). Integrated information in the thermodynamic limit. Neural Networks, Volume 114, June 2019, Pages 136-146. doi:10.1016/j.neunet.2019.03.001

Abstract: The capacity to integrate information is a prominent feature of biological, neural, and cognitive processes. Integrated Information Theory (IIT) provides mathematical tools for quantifying the level of integration in a system, but its computational cost generally precludes applications beyond relatively small models. In consequence, it is not yet well understood how integration scales up with the size of a system or with different temporal scales of activity, nor how a system maintains integration as it interacts with its environment. After revising some assumptions of the theory, we show for the first time how modified measures of information integration scale when a neural network becomes very large. Using kinetic Ising models and mean-field approximations, we show that information integration diverges in the thermodynamic limit at certain critical points. Moreover, by comparing different divergent tendencies of blocks that make up a system at these critical points, we can use information integration to delimit the boundary between an integrated unit and its environment. Finally, we present a model that adaptively maintains its integration despite changes in its environment by generating a critical surface where its integrity is preserved. We argue that the exploration of integrated information for these limit cases helps in addressing a variety of poorly understood questions about the organization of biological, neural, and cognitive systems.
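
For readers unfamiliar with mean-field approximations, here is a minimal sketch of the general idea. It is not the kinetic model or the φ measures from the paper. It uses the textbook equilibrium mean-field Ising model with uniform coupling, where the magnetization of an infinite system satisfies m = tanh(βJm) and the susceptibility grows without bound as βJ approaches 1 from below. The function names, the coupling `J`, and the inverse temperature `beta` are illustrative assumptions.

```python
import math


def mean_field_magnetization(beta, J=1.0, m0=0.5, tol=1e-12, max_iter=100_000):
    """Solve m = tanh(beta * J * m) by fixed-point iteration.

    Textbook mean-field Ising model with zero external field
    (an assumption made for illustration, not the paper's model).
    """
    m = m0
    for _ in range(max_iter):
        m_new = math.tanh(beta * J * m)
        if abs(m_new - m) < tol:
            return m_new
        m = m_new
    return m


def susceptibility(beta, J=1.0):
    """Mean-field susceptibility dm/dh at zero field.

    Differentiating m = tanh(beta * (J * m + h)) with respect to h gives
    chi = beta * (1 - m^2) / (1 - beta * J * (1 - m^2)).
    """
    m = mean_field_magnetization(beta, J)
    s = 1.0 - m * m
    return beta * s / (1.0 - beta * J * s)


if __name__ == "__main__":
    # Below beta * J = 1 the magnetization vanishes; above it, order appears.
    for beta in (0.5, 0.9, 0.99, 2.0):
        m = mean_field_magnetization(beta)
        print(f"beta={beta:5.2f}  m={m:.4f}  chi={susceptibility(beta):.2f}")
```

In this toy case the susceptibility is the quantity that blows up near the critical point. The paper reports an analogous divergence for its modified integration measures, computed with different tools.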